Enterprise AI failures are commonly attributed to weak models, insufficient computational infrastructure, unrealistic executive expectations, or poor implementation strategy. Across healthcare, finance, insurance, government, and critical infrastructure, however, a different operational reality is emerging. Many organizations attempting to deploy AI at scale are discovering that their greatest vulnerability is not the model itself but the condition of the data environment on which the system depends.[1]

This realization is arriving later than many executives expected. For the past several years, the AI industry has focused heavily on model capability, benchmark performance, parameter scale, and infrastructure expansion. Vendors have competed aggressively on speed, multimodal capability, automation potential, and generative sophistication. Yet beneath this public narrative sits a quieter and considerably less glamorous challenge: most enterprise environments were never designed to support large-scale machine reasoning across fragmented operational systems.

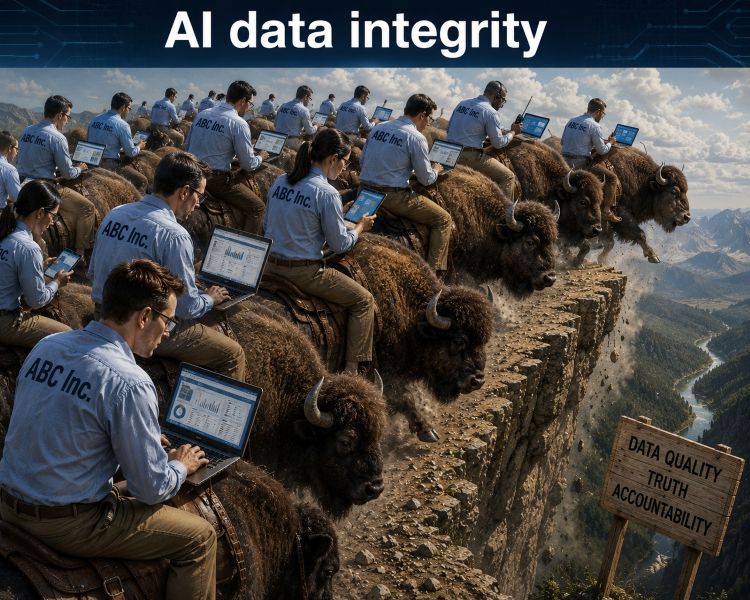

In practice, organizational data environments often resemble layered archaeological structures rather than coherent digital ecosystems. Decades of mergers, departmental software purchases, spreadsheet dependencies, manual workarounds, inconsistent governance standards, and disconnected databases have created deeply fragmented operational architectures. Human operators historically compensated for these weaknesses through institutional memory, contextual judgment, and operational experience. AI systems do not compensate in the same way. Instead, they consume information structures exactly as they exist and amplify whatever inconsistencies, omissions, conflicts, or distortions are present within them.

This is why many organizations are now encountering a difficult operational truth: AI does not merely reveal informational weaknesses. It operationalizes them.

The myth of enterprise AI readiness

The term “AI-ready” has become common in boardrooms, vendor presentations, and technology strategy discussions. In many cases, however, the phrase lacks operational meaning. Most organizations are not meaningfully prepared for enterprise-scale AI deployment because they do not possess sufficiently coherent information environments to support trustworthy outputs at scale.[2]

The issue is not necessarily the absence of data. Modern enterprises often possess enormous quantities of information. The problem is structural integrity. A single customer may exist under multiple identifiers across separate systems. Healthcare providers may maintain inconsistent patient records between departments. Insurance organizations frequently inherit incompatible taxonomies through acquisition activity. Government agencies often operate across fragmented legacy infrastructure that evolved independently over decades. Even basic metadata standards are frequently inconsistent across operational units.
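To make the duplicate-identifier problem concrete, the sketch below groups records from two systems by a normalized match key. It is a deliberately naive illustration, assuming hypothetical "billing" and "crm" systems and an exact match on a cleaned email address; production entity resolution typically combines several attributes, fuzzy matching, and human review.

```python
from collections import defaultdict

# Hypothetical records: the same customer held under different IDs in two systems.
records = [
    {"system": "billing", "id": "B-1001", "name": "Acme Corp.", "email": "AP@Acme.com"},
    {"system": "crm", "id": "C-77", "name": "ACME Corporation", "email": "ap@acme.com "},
    {"system": "crm", "id": "C-78", "name": "Globex Ltd", "email": "info@globex.com"},
]

def match_key(record):
    """Normalize the email into a match key (an illustrative rule, not a standard)."""
    return record["email"].strip().lower()

def group_candidates(records):
    """Group (system, id) references that share the same normalized key."""
    groups = defaultdict(list)
    for r in records:
        groups[match_key(r)].append((r["system"], r["id"]))
    return dict(groups)

clusters = group_candidates(records)
# Keys referenced by more than one record are candidate duplicates for review.
duplicates = {k: v for k, v in clusters.items() if len(v) > 1}
print(duplicates)  # {'ap@acme.com': [('billing', 'B-1001'), ('crm', 'C-77')]}
```

Note that the two Acme records only match after whitespace and case are normalized; a model consuming the raw records would see two unrelated customers.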

These conditions create severe downstream consequences for AI deployment. Machine learning systems are highly effective at identifying statistical relationships within data, but they do not inherently determine whether those relationships are operationally trustworthy, contextually complete, temporally accurate, or procedurally admissible. If the informational substrate is unstable, the outputs generated from that substrate become unstable as well.

This is one reason many enterprise AI projects initially appear successful during pilot stages but encounter major operational failures during scaling. Pilot environments are typically curated, simplified, and tightly controlled. Scaling introduces real-world enterprise entropy: conflicting workflows, inconsistent governance structures, incomplete metadata, duplicated records, legacy integrations, and human operational variation.

The AI model is rarely the only point of failure. More often, the model becomes the mechanism through which hidden organizational disorder is exposed.

Data quality and the illusion of confidence

One of the most significant operational risks associated with modern generative AI systems is that they often fail persuasively rather than visibly. Traditional enterprise software systems usually exhibit recognizable failure states. A database query crashes. A transaction fails. An integration breaks. An application produces an explicit error.

Large language models and related AI systems behave differently. They frequently generate fluent, coherent, and professionally structured outputs even when the underlying information quality is severely compromised.[3] This creates a dangerous perception of reliability because organizations may interpret linguistic sophistication as evidence of accuracy.

The Stanford Center for Research on Foundation Models has repeatedly documented that large language models can produce highly convincing outputs that nonetheless contain fabricated information, incorrect references, or structurally unsupported conclusions.[4] The challenge becomes even more severe when enterprise data environments already contain inconsistencies, omissions, or contextual fragmentation.

Under these conditions, AI systems can unintentionally create what some enterprise governance specialists describe as “confidence amplification.” Weak assumptions embedded inside fragmented operational systems become transformed into outputs that appear analytically rigorous despite resting on unstable informational foundations.

This problem extends beyond hallucinations. Context degradation is equally important. Even technically correct information may become operationally misleading when provenance, temporal sequence, authorization boundaries, or workflow context are lost during ingestion or transformation processes. A financial metric without accounting context may distort risk interpretation. A medical recommendation generated from incomplete patient history may introduce clinical exposure. A law enforcement analysis derived from inconsistent classification standards may compromise evidentiary integrity.

The issue is therefore not merely whether the model generated an answer. The issue is whether the organization can trust the informational conditions under which that answer was produced.

The hidden economics of data remediation

One of the least publicly discussed aspects of enterprise AI deployment is the enormous operational cost associated with data preparation and remediation. While public attention tends to focus on graphics processing units (GPUs), infrastructure investment, and model licensing, many organizations discover that the majority of implementation effort is spent addressing informational inconsistency rather than building AI functionality itself.

Enterprise AI research from McKinsey & Company (The State of AI) and Harvard Business Review (Building the AI-Powered Organization) indicates that workflow redesign, governance alignment, organizational restructuring, and data remediation consume a substantial share of enterprise AI deployment timelines and budgets.[5] [6]

This work is expensive because it requires both technical expertise and operational understanding.

Data remediation frequently involves resolving duplicated entities, repairing historical corruption, standardizing taxonomies, reconstructing lineage, validating metadata integrity, reconciling inconsistent workflows, and establishing governance accountability structures across departments that may have operated independently for years.
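One small piece of this work, taxonomy standardization, can be sketched as mapping each department's local labels onto a single canonical set. The departments, labels, and mapping table below are hypothetical; the design choice worth noting is that unmapped labels are routed to human review rather than guessed, so remediation surfaces gaps instead of hiding them.

```python
# Hypothetical mapping from department-specific labels to one canonical taxonomy.
CANONICAL = {
    "finance": {"Rev": "revenue", "Opex": "operating_expense"},
    "operations": {"Sales": "revenue", "Running costs": "operating_expense"},
}

def standardize(department, label):
    """Return the canonical label, or None when no mapping exists."""
    return CANONICAL.get(department, {}).get(label)

def remediate(rows):
    """Split (department, label, amount) rows into standardized rows and rows needing review."""
    clean, review = [], []
    for dept, label, amount in rows:
        canonical = standardize(dept, label)
        if canonical is None:
            review.append((dept, label, amount))  # surface the gap instead of guessing
        else:
            clean.append((dept, canonical, amount))
    return clean, review

rows = [("finance", "Rev", 120), ("operations", "Sales", 80), ("regional", "Turnover", 40)]
clean, review = remediate(rows)
print(clean)   # [('finance', 'revenue', 120), ('operations', 'revenue', 80)]
print(review)  # [('regional', 'Turnover', 40)]
```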

In some organizations, the discovery process itself becomes destabilizing. AI deployment efforts reveal that different departments rely upon contradictory operational assumptions without realizing that the inconsistencies exist. Finance may classify data differently from operations. Regional offices may maintain incompatible reporting standards. Legacy workflows may persist unofficially through spreadsheets and manual reconciliation practices invisible to central governance teams.

This explains why many AI projects evolve into organizational transformation exercises rather than purely technical deployments. The challenge is not simply teaching machines to process information. It is determining whether the enterprise itself possesses coherent operational truth structures.

Synthetic contamination and recursive instability

An additional challenge is beginning to emerge across the broader information ecosystem: synthetic contamination. As generative AI systems produce a growing share of internet content, organizations may unknowingly ingest AI-generated material back into future training and retrieval pipelines.[7]

This phenomenon introduces recursive instability into information environments. Synthetic text influences future synthetic text generation. AI-generated imagery trains future imaging systems. Generated code becomes embedded in future software repositories. Over time, distinguishing original verified information from recursively generated artifacts becomes increasingly difficult.

Researchers publishing in Nature ("AI Models Collapse When Trained on Recursively Generated Data") have warned that repeated exposure to synthetic training data may contribute to forms of "model collapse," in which informational diversity gradually degrades and statistical representations become increasingly distorted.[8]

While the long-term implications remain under investigation, the trajectory raises serious governance concerns for enterprises dependent upon trusted informational systems.

Future challenges may therefore extend beyond traditional data quality management. Organizations may increasingly require mechanisms capable of verifying provenance, authenticity, lineage, and contextual integrity across mixed human and synthetic information environments.
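One widely used building block for lineage verification is a hash chain, in which each log entry includes the hash of the entry before it. The sketch below assumes a simple in-memory log and hypothetical event fields ("source", "model", "origin") that tag material as human or synthetic. It shows that later tampering with a recorded step becomes detectable; it does not prove that a record was authentic when it was first logged.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry

def entry_hash(prev_hash, event):
    """Hash an event together with the previous entry's hash, using canonical JSON."""
    payload = json.dumps({"prev": prev_hash, "event": event}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()

def append(log, event):
    """Append an event, linking it to the hash of the previous entry."""
    prev = log[-1]["hash"] if log else GENESIS
    log.append({"event": event, "prev": prev, "hash": entry_hash(prev, event)})

def verify(log):
    """Recompute every link; any change to an earlier entry breaks the chain."""
    prev = GENESIS
    for entry in log:
        if entry["prev"] != prev or entry["hash"] != entry_hash(prev, entry["event"]):
            return False
        prev = entry["hash"]
    return True

log = []
append(log, {"step": "ingest", "source": "crm_export", "origin": "human"})
append(log, {"step": "summarize", "model": "internal-llm", "origin": "synthetic"})
print(verify(log))  # True
log[0]["event"]["source"] = "unknown"  # simulate an undocumented edit to history
print(verify(log))  # False
```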

This is particularly important in high-consequence sectors where evidentiary integrity, regulatory accountability, and operational reconstructability are essential.

Why governance is becoming more important than model size

For much of the modern AI cycle, industry competition focused primarily on capability expansion. Larger models, larger context windows, faster inference, broader multimodal functionality, and increasing automation dominated strategic narratives.

Enterprise priorities are beginning to shift.

Increasingly, organizations are asking governance-centered questions rather than purely performance-centered questions:

- Can outputs be trusted?

- Can workflows be reconstructed?

- Can authorization boundaries be enforced?

- Can assumptions be audited?

- Can decision states be explained retrospectively?

- Can information lineage be verified?

These questions are becoming central within emerging regulatory frameworks. The European Union Artificial Intelligence Act places increasing emphasis on transparency, traceability, documentation, risk management, and governance obligations for high-risk AI systems.[9] The NIST AI Risk Management Framework 1.0 similarly emphasizes accountability, monitoring, and trustworthy system design.[10]

This represents a significant market transition. Enterprise value may depend less on raw generative capability and more upon operational trustworthiness.

In other words, the competitive advantage may no longer belong exclusively to organizations with the largest models. It may belong to organizations capable of maintaining the most reliable operational truth structures.

Human expertise remains the stabilizing layer

Despite aggressive automation narratives, experienced human operators remain essential in enterprise environments precisely because they continue to function as contextual correction systems. Humans possess situational awareness, domain expertise, ethical interpretation capabilities, operational skepticism, and cross-disciplinary reasoning abilities that remain difficult to replicate through probabilistic systems.

An experienced healthcare practitioner recognizes contextual nuance that may never appear fully inside structured clinical data. A veteran police investigator identifies inconsistencies invisible to statistical pattern matching systems. Finance professionals understand regulatory implications extending beyond numerical outputs. Emergency management personnel evaluate environmental variables that historical datasets may only partially represent.

AI systems excel at processing large-scale informational relationships. Human operators remain responsible for determining whether those relationships are operationally meaningful, contextually valid, and procedurally appropriate.

The future of enterprise AI therefore appears less likely to involve complete human replacement and more likely to involve disciplined human-AI operational collaboration supported by strong governance boundaries and trusted information systems.

Conclusion

The modern AI industry has invested enormous energy in discussions surrounding model scale, computational performance, and generative capability. Yet across enterprise environments, a different reality is becoming increasingly visible. The primary limiting factor for trustworthy AI deployment is often not the sophistication of the model, but the integrity of the informational environment upon which the model depends.

Organizations deploying AI into fragmented, weakly governed, or operationally inconsistent data environments may inadvertently amplify instability rather than intelligence. Fluency can obscure corruption. Automation can accelerate hidden errors. Confidence can become detached from informational validity.

This is why data quality can no longer be treated as a secondary technical issue delegated quietly to IT departments. It has become a central governance, operational, and strategic concern.

The organizations most likely to succeed in the next phase of enterprise AI adoption may not be those possessing the loudest marketing narratives or the largest infrastructure budgets. They are more likely to be organizations capable of maintaining trusted lineage, coherent workflows, contextual integrity, governance discipline, and reconstructable operational truth structures.

AI ultimately amplifies whatever informational conditions already exist inside the enterprise.

If those conditions are strong, AI can become a powerful operational multiplier.

If they are weak, AI may simply industrialize disorder.

References

[1] McKinsey & Company, The State of AI: How Organizations Are Rewiring to Capture Value

[2] Harvard Business Review, Building the AI-Powered Organization

[3] IBM, What Is Data Quality?

[4] Stanford Center for Research on Foundation Models

[5] McKinsey Global Survey: The State of AI

[6] Harvard Business Review, Building the AI-Powered Organization

[7] OECD AI Policy Observatory

[8] Nature, AI Models Collapse When Trained on Recursively Generated Data

[9] European Union Artificial Intelligence Act

[10] NIST AI Risk Management Framework 1.0

(Mark Jennings-Bates – BIG Media Ltd., 2026)
